We’ve already established the importance of user stories when it comes to the discovery phase of a digital project. However, user stories in a vacuum are not enough – left alone, they leave way too much room for assumption and error. And we all know that Assumptionville is where projects go to die.

So how do we avoid assumptions when it comes to user stories? Simple: add acceptance criteria to each one.

Acceptance Criteria: Definition and Importance

Let’s take a look at a sample user story I wrote about in our user stories article:

As a prospective buyer, I want to reset my account password if I’ve forgotten it so that I can access my account.

This user story, on the surface, is relatively easy to understand, right? But when we start thinking about the nuts and bolts of the process, it quickly becomes clear that the user story doesn’t have enough detail. How can we measure whether the user story has been properly met if we don’t know the details?

That’s exactly what acceptance criteria are designed to do: make clear the details that define the user story. If you stop and think about it, “acceptance criteria” are quite literally the criteria a user story must satisfy to be acceptable (that is, accepted by the product manager or client). More succinctly, acceptance criteria are the functional requirements that a user story must meet to be marked complete.

It’s helpful to start with questions when we’re attempting to define acceptance criteria. Some questions the above user story elicits might be:

- Where do I reset my password?

- What steps must I take if my password is reset?

- How do we stop illegitimate actors from using this functionality?

- Does anyone need to be notified internally?

And from those items, we can begin to define the criteria that the user story can be built towards and tested against:

- A password reset is requested from a login screen.

- The user is prompted to enter identifying information associated with their account.

- When a password reset is requested, a notification is sent to the IT team to confirm its legitimacy.

- A unique password reset link is sent to the user.

- The password reset link is only valid for a set period of time.

- When the link is clicked, the user is prompted to enter and confirm their new password.

And so forth.

Now, all of a sudden, the high-level user story has a set of concrete details supporting it. Developers know what to build, designers know what to design, and expectations around functionality and effort are much clearer.

Beyond that, when the user story moves into production and ultimately into the QA and user acceptance (UA) testing phases, there is a clear set of criteria to test the user story against. After all, if you don’t know the criteria, how do you know if the item passed or failed?
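To make "testing against the criteria" concrete, here is a minimal Python sketch that turns two of the criteria above (a unique reset link, valid only for a set period) into automated checks. The `ResetToken` class, the `issue_token` helper, and the 30-minute lifetime are all hypothetical and chosen purely for illustration; the real values would come out of the acceptance criteria conversation.

```python
import secrets
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical lifetime; the actual period is agreed with the client.
LINK_LIFETIME = timedelta(minutes=30)


@dataclass(frozen=True)
class ResetToken:
    value: str
    issued_at: datetime

    def is_valid(self, now: datetime) -> bool:
        """A link is valid only within LINK_LIFETIME of being issued."""
        return timedelta(0) <= now - self.issued_at <= LINK_LIFETIME


def issue_token(now: datetime) -> ResetToken:
    """Create a random, hard-to-guess token for a reset link."""
    return ResetToken(value=secrets.token_urlsafe(32), issued_at=now)


# Criterion: "A unique password reset link is sent to the user."
start = datetime(2024, 1, 1, 9, 0)
first, second = issue_token(start), issue_token(start)
assert first.value != second.value

# Criterion: "The password reset link is only valid for a set period of time."
assert first.is_valid(start + timedelta(minutes=29))
assert not first.is_valid(start + timedelta(minutes=31))
```

Each assertion maps back to one written criterion, so a failing check points directly at the requirement that was not met.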

Download The Free User Stories Spreadsheet

Manage your user stories! Make sure to get this free user stories worksheet – available in XLS, Numbers, and Google Sheets formats – so you can digitize your user stories, add acceptance criteria, and continually update them going forwards.

How to Format Acceptance Criteria

Much like the process of capturing user stories and their priorities, there is no single “right” way to record acceptance criteria. However, there are some ways we know work – so let’s talk about those in the context of the overall user story process. No need to reinvent the wheel!

Remember how user stories use the following format?

As a <user persona> I want <functionality> so that <benefit / rationale>.

Your acceptance criteria can follow a similar format.

Given <some context>, when <this action is carried out>, then <this result happens>.

For the example above, the acceptance criteria could read like so:

- Given that a user has forgotten their password, when they request a password reset, then they are prompted to enter identifying information.

- Given that a user has requested a password reset, when they enter their identifying information, then they are sent a password reset link.

- Given that a user has clicked a password reset link, when they enter their new password, then they must enter it a second time to confirm it before the reset succeeds.

- Given that a user has reset their password, when they go back to the login screen, then their new password will allow them to log in.

- Given that a user has forgotten their password, when they request a password reset, then a notification is sent to the IT department.
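If you keep your criteria in a digital format, the Given/When/Then template can also be captured as structured data. The following is a minimal Python sketch, assuming a hypothetical `AcceptanceCriterion` class (not part of any tool mentioned here), that stores the three parts separately and renders them in the format above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceptanceCriterion:
    """One criterion, split into the three parts of the template."""
    given: str
    when: str
    then: str

    def render(self) -> str:
        """Return the criterion as a single Given/When/Then sentence."""
        return f"Given {self.given}, when {self.when}, then {self.then}."


criterion = AcceptanceCriterion(
    given="that a user has requested a password reset",
    when="they enter their identifying information",
    then="they are sent a password reset link",
)
print(criterion.render())
```

Keeping the three parts separate makes gaps easy to spot: a criterion with no clear context or no observable result usually needs another round of questions.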

A single user story can, and should, have multiple acceptance criteria. Acceptance criteria should be small and easily digestible, built to address the sub-stories within each user story. In our example, these are what the user needs to input to begin the password reset process, how they’ll move through the password reset flow, and the security relating to the password reset.

How to Capture Acceptance Criteria

As mentioned, we capture user stories in a workshop with our clients. That workshop covers a lot of ground – because of that, we often defer acceptance criteria to another conversation rather than try to do too much in a kickoff. The key word here, though, is conversation.

The best way to define acceptance criteria for a given user story is to have a conversation with the user (or the knowledge holder or representative of that user) to whom that story relates. That might sound like a monumental undertaking, but remember that there are usually only around 3-5 user personas to focus on in a project.

What you can do, then, is grab your stack of prioritized user stories. I’d recommend just grabbing the 1s / Ms so you’re focusing strictly on the must-haves. Organize them by user persona (for example, here are all the user stories that relate to the IT department; here are all the user stories that relate to the Prospective Job Seeker; etc).
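If your stories already live in a spreadsheet or table, this filter-and-group step can be automated. Below is a minimal Python sketch; the `UserStory` class, the `"M"` and `"S"` priority labels, and the sample stories are assumptions made for illustration, so adapt them to however you recorded priorities.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UserStory:
    persona: str
    text: str
    priority: str  # assumed labels: "M" = must-have, "S" = should-have


def must_haves_by_persona(stories):
    """Keep only must-have stories and group them by persona."""
    grouped = defaultdict(list)
    for story in stories:
        if story.priority == "M":
            grouped[story.persona].append(story)
    return dict(grouped)


# Illustrative sample data only.
stories = [
    UserStory("Prospective buyer", "Reset a forgotten password", "M"),
    UserStory("IT department", "Be notified of password reset requests", "M"),
    UserStory("Prospective buyer", "Save a favourite listing", "S"),
]

for persona, items in must_haves_by_persona(stories).items():
    print(persona, [story.text for story in items])
```

Each resulting group becomes the agenda for one meeting with that persona.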

Then, book a meeting with each of those users. It can be an in-person meeting (recommended), a video call (second best), or a phone call (still useful). In this meeting, simply run through each user story one by one and ask questions designed to uncover more detail about the expected functionality. Have a conversation about each story.

It’s helpful to talk through the end-to-end process with the user/client – for example “Ok, how are users logging in? What happens if they can’t remember their credentials? Who is responsible for those requests? What happens next? Is this something we can automate? How will you know when a password has successfully been reset? What format should a notification come in?” Talking with the user about the entire process – the story of the functionality, as it were – and asking the right questions will uncover acceptance criteria.

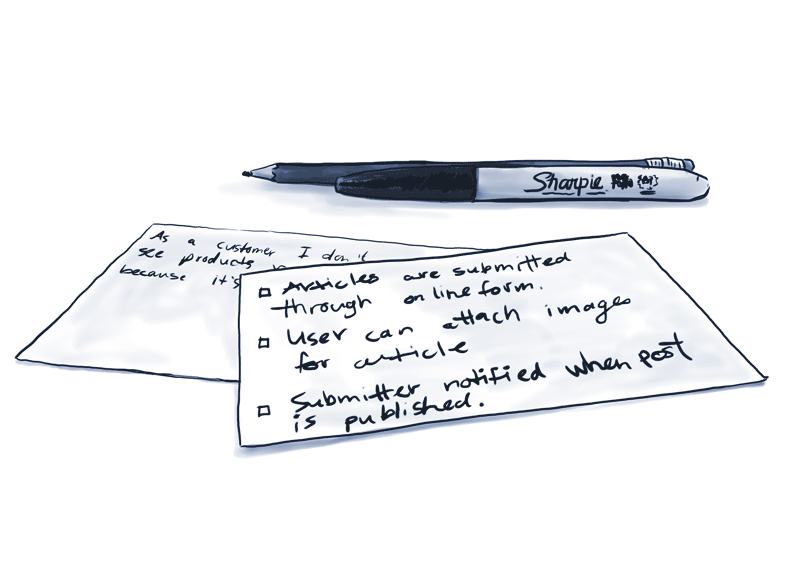

When you’re doing this, make sure to take a bunch of simple-language notes! Then, before moving on from a user story card, turn these notes into acceptance criteria for the story and make sure you come to a consensus in the conversation. You can write the criteria down on the back of the user story card, or if you’ve already transferred the user stories into digital format (for example, a spreadsheet or table), then capture the acceptance criteria in a related cell. If you don’t already have our digital user stories spreadsheet, you can download it above.

Then, go on to the next story. And the next. And the next. Then go to the next user group. Lather, rinse, repeat.

All of a sudden, you’ll have robust criteria to accompany each user story. Criteria that were defined collaboratively with the user. Criteria that can be estimated against, built towards, and tested.

Now? Your user stories are usable themselves. The discovery phase of your digital project can move into the imperative auditing step next. Learn what a Discovery Audit is, and how to run one, in the next chapter!